- Client: BMW Garching
- Content: Road, building and landscape scanning
- Related references: 3D-Scan of soccer stadium
- Tags: 3D surveying services, 3D laser scanning
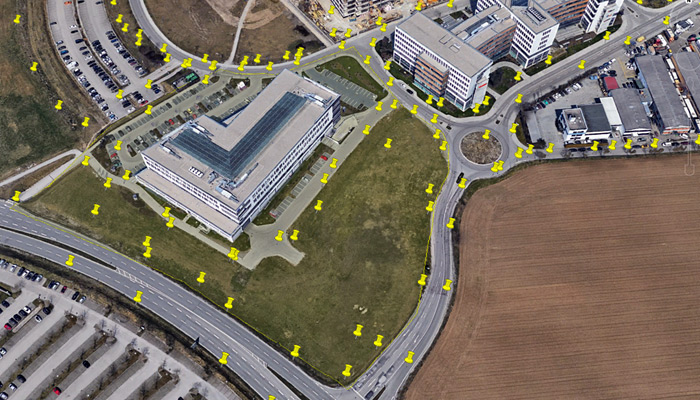

In 2017-2018 we carried out a comprehensive and technically challenging 3D scanning project that combined and fused airborne and terrestrial 3D scanning. The results served BMW's research department for autonomous driving and driver assistance systems.

Thanks to our long-standing and close cooperation with RIEGL, terrestrial mid- and long-range laser scanners (VZ-400 and VZ-2000i) were used successfully in this project.

In this project, a total area of more than 240 hectares was measured and modeled three-dimensionally and photorealistically with very high accuracy.

Photo right: BMW – Section of lidar data

The results of our work were evaluated and used for further analysis in a BMW-sponsored dissertation by Alexander Schaermann (1). Schaermann applied simulation and validation methods, in particular for testing and safeguarding driver assistance systems with comprehensive sensor technology.

As basic as-built documentation, we developed three-dimensional virtual representations of the real environment in which virtual objects can move during the simulation. Since Schaermann's validation is based on comparing real and simulated data, our task was to create an exact virtual replica of a real track section. This made the real and the virtual world as comparable as possible. BMW placed particularly high demands on the environment modeling with regard to the accuracy of the model geometry and the georeferencing. In addition, the environment model had to be photorealistic so that models of imaging sensors (especially the cameras integrated in the vehicles) could be checked against it.

To investigate various driving scenarios on different road types, an area in the west of Garching was defined where urban areas, country roads and a freeway lie close together. Fulfilling the task required three precisely coordinated documentation steps:

1. Survey of the entire area with different, combined 3D sensors
2. Fusion of the recorded measurement data (dGPS and IMU, laser scanning and photogrammetry)
3. Modeling and conversion of the recorded data into a suitable format

The end result is that any number of virtual car rides can now be carried out in this highly accurate simulation environment. Since the environment model had to be georeferenced, the respective measurement devices (laser scanners and cameras) required corresponding GNSS sensors. For terrestrial photogrammetry, photographs were taken from the ground or from a mobile platform and, in small areas, with a photogrammetry drone. The city area was surveyed mainly with close-range scanners.

For airborne photogrammetry we used a helicopter to survey the remaining areas, mainly fields, the highway and country roads. During data fusion, the dGNSS data from the reference stations, the point clouds from the laser scanners and the photogrammetry images were evaluated jointly to improve the georeferencing and the model quality. The achieved accuracies were verified by measuring an extensive network of reference points (accuracy requirement: < 5 cm absolute, < 2 cm relative).
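A check against reference points of this kind could be sketched as follows. This is a minimal illustration, not the workflow used in the project: the function name, the point ids and the coordinates are made up, and "relative accuracy" is assumed here to mean the deviation of point-to-point distances in the model from the same distances in the reference network. Only the two tolerances come from the stated requirement.

```python
import math
from itertools import combinations

# Tolerances from the stated requirement (metres).
ABS_TOL_M = 0.05  # absolute: < 5 cm
REL_TOL_M = 0.02  # relative: < 2 cm


def check_accuracy(reference, measured):
    """Return (max_abs_error, max_rel_error) in metres.

    reference / measured: dicts mapping a point id to (x, y, z) in metres,
    both expressed in the same projected coordinate system.
    """
    # Absolute error: 3D offset of each model point from its surveyed reference.
    max_abs = max(math.dist(reference[k], measured[k]) for k in reference)

    # Relative error (assumed definition): deviation of each point-to-point
    # distance in the model from the same distance in the reference network.
    max_rel = max(
        (
            abs(math.dist(reference[a], reference[b])
                - math.dist(measured[a], measured[b]))
            for a, b in combinations(sorted(reference), 2)
        ),
        default=0.0,
    )
    return max_abs, max_rel


# Made-up example coordinates (not project data).
reference = {"P1": (0.0, 0.0, 0.0), "P2": (100.0, 0.0, 0.0), "P3": (0.0, 50.0, 0.0)}
measured = {"P1": (0.03, 0.0, 0.0), "P2": (100.03, 0.01, 0.0), "P3": (0.03, 50.0, 0.01)}

max_abs, max_rel = check_accuracy(reference, measured)
passed = max_abs < ABS_TOL_M and max_rel < REL_TOL_M
```

In this example every point is shifted by roughly 3 cm in the same direction, so the absolute error is close to the limit while the relative error stays small. That is the reason the two criteria are evaluated separately.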

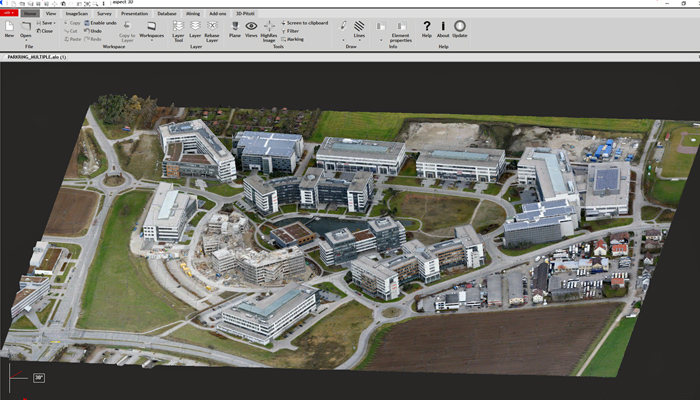

The surveyed environment was then modeled in 3D to the desired level of detail (LoD 300) as defined for Building Information Modeling (BIM). After that, the 3D model was given photorealistic textures or matched PBR materials. Finally, we reduced the number of polygons so that the simulation could run in real time, and compared the finished model with the point cloud from the fusion step for quality assurance.
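The comparison of model and point cloud can be illustrated with a deliberately simplified sketch. It assumes the deviation is measured from each mesh vertex to its nearest point-cloud point; a production check would more likely use point-to-surface distances and a spatial index. The function names and the sample data are hypothetical.

```python
import math


def vertex_to_cloud_deviation(vertices, cloud):
    """For each mesh vertex, distance to the nearest point-cloud point (metres)."""
    # Brute force, O(n * m); large data sets would need a spatial index
    # such as a k-d tree instead.
    return [min(math.dist(v, p) for p in cloud) for v in vertices]


def summarize(deviations):
    """Return (maximum, root mean square) of the deviations."""
    rms = math.sqrt(sum(d * d for d in deviations) / len(deviations))
    return max(deviations), rms


# Made-up data: a flat road patch sampled on a 1 m grid, and two mesh vertices
# that sit 1 cm above and 2 cm below the scanned surface.
cloud = [(float(x), float(y), 0.0) for x in range(5) for y in range(5)]
vertices = [(1.0, 1.0, 0.01), (3.0, 2.0, -0.02)]

deviations = vertex_to_cloud_deviation(vertices, cloud)
max_dev, rms_dev = summarize(deviations)
```

The maximum flags local outliers introduced by polygon reduction, while the RMS value gives an overall measure of how closely the simplified model follows the scan.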

For the virtual environment used in BMW's research project, the completed model could be exported in formats suitable for the simulation environment (OpenFlight (FLT) or OpenSceneGraph (IVE)). At BMW, numerous research tasks related to the analysis and visualization of real vehicle sensors were then carried out in this virtual environment.